AI and the Costs of Doing Social Science

Artificial intelligence will change how social science is done. That much seems clear. The real question is how (and how much).

There has already been a lot of debate on this, much of it about costs.

If AI can reliably help with writing and executing analysis code, then a lot of the friction in empirical work drops away. More people can prototype quickly, test ideas they would never have had time to test, and turn rough questions into working analyses. That is exciting, and it is also unsettling, because it changes what counts as being skilled.

Similarly, when the fixed cost of moving a project forward collapses, we can push many more ideas ahead, but we still do not have unlimited time to check, interpret, and scrutinize results. The bottleneck shifts toward human verification and judgment, and the temptation is to treat plausible output as correct output.

Yet these cost-centric perspectives capture only part of the story. The deeper shift may lie elsewhere. AI will not only change how fast research can be produced. It may change what counts as research output in the first place.

Can’t Believe it’s not Papers!

For a long time the central artifact of social science has been the article, treated as the paradigmatic unit of knowledge. Evidence appears in tables and figures, but the primary medium is text. The cost of producing good text has, of course, dropped significantly.

However, artificial intelligence and other computational tools have also made it far easier to produce other types of scientific output, ones that can communicate ideas and insights better than text alone.

Dashboards, simulations, interactive visualizations, and small research tools can allow readers (academic or otherwise) to explore evidence directly rather than simply reading a description of it.

These artifacts can function as a form of proof-of-concept. Instead of arguing that a particular mechanism might exist, a researcher can build a small system that demonstrates how it could work (or not!).
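To make this concrete, here is a minimal sketch of what such a proof-of-concept might look like. It is my own illustration rather than anything drawn from a specific project, and it borrows the classic threshold model of collective behavior usually associated with Granovetter: each person joins a crowd once enough others have already joined. The function name `cascade_size` and the two example populations are hypothetical, chosen only for this illustration.

```python
def cascade_size(thresholds):
    """Return how many people end up participating.

    Each person joins once the number of people already participating
    is at least their personal threshold. We repeat until nobody new joins.
    """
    participating = 0
    while True:
        # Count everyone whose threshold is met by the current crowd size.
        joined = sum(1 for t in thresholds if t <= participating)
        if joined == participating:
            return participating
        participating = joined


# 100 people with thresholds 0, 1, 2, ..., 99: each joiner tips the next one.
uniform = list(range(100))

# The same crowd, except the person with threshold 1 now has threshold 2.
perturbed = [0, 2] + list(range(2, 100))

print(cascade_size(uniform))    # 100: the cascade reaches everyone
print(cascade_size(perturbed))  # 1: the cascade stalls after the first person
```

A dozen lines are enough to show that two nearly identical populations can produce radically different collective outcomes. A reader can change a single number and watch the result flip, which is harder to do with a paragraph of prose.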

Social science has rarely treated these outputs as central scholarly contributions. The proliferation of artificial intelligence may begin to change this.

Won’t somebody please think of the children?!

Tasks that once required substantial programming expertise are becoming easier.

A few years ago, creating a complex dashboard could require days of work. Now the same dashboard can be built in a few hours. AI tools can do most of the debugging, code generation, and even testing.

Yet to make the most of these tools, researchers must have at least a cursory understanding of what happens under the hood. Meaningful collaboration with AI systems requires some familiarity with data structures, computational logic, and reproducible workflows.

This raises a broader question about academic training: what should future social scientists learn?

Today we expect even the most qualitative-minded students to have at least some familiarity with mystical terms like “correlation”, “p-value”, and “coefficient”.

In a few years another esoteric vocabulary will surely creep into undergraduate syllabi everywhere: “json”, “terminal”, “git”.

This does not mean that we are going to be producing an army of computer scientists, any more than we currently produce an army of statisticians. But we recognize that students need to know what a regression table is in order to engage with current research in a meaningful way. Soon the same will be true of a basic computational vocabulary.

make_pretty()

One of the world’s most cited papers boldly tells us that “Attention Is All You Need”. But we also need good judgment, and arguably good taste. We know when something was written entirely by an LLM, and we tend to dislike it.

I have heard many students and colleagues despair about what humans will do in a future where we cannot outproduce AI.

But do we really want to compete with AI? It is already amazing at building things, but we are absolutely fantastic at breaking them. Smashing things is (presumably) a lot more fun!

More importantly, by picking things apart and putting them back together we learn and we innovate. Humans are the ones who know what humans want, and one of our drives is to make beautiful things.

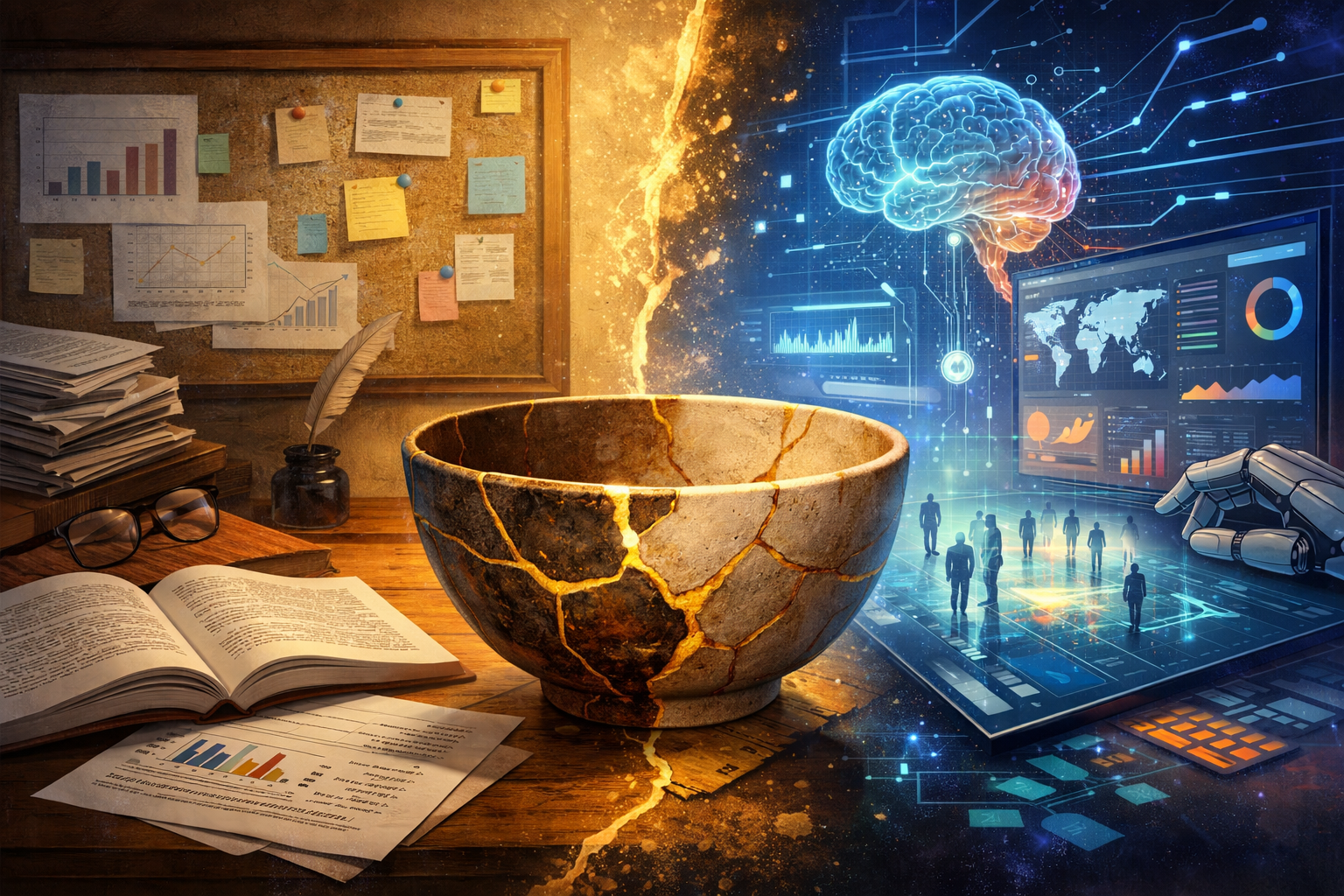

As with kintsugi pottery, we researchers can spend hours breaking perfectly adequate AI-made code and gluing it back together with the golden nuggets of our imagination.

Who among us has not spent endless hours crafting a sentence in an article revision, or making presentation slides absolutely perfect? Does it really matter if we end up doing this in MS Word or VS Code?

Naturally, the costs of producing research will change. But since when is minimizing costs what we are all about?